The Future of Large Language Models: Understanding, Reasoning, Creativity, and Multitasking

syndu | June 6, 2023, 10:45 p.m.

Large language models continue to grow in size and complexity. As they do, we can expect significant improvements in their capabilities, along with the emergence of new behaviors and applications.

Emergent behaviors from larger context sizes:

- Enhanced understanding: As context sizes increase, language models will be better equipped to process longer and more complex texts. This should let them give more accurate and coherent responses to intricate queries and long conversations.

- Improved reasoning and problem-solving: Larger context sizes will allow language models to consider a wider range of information when generating responses, leading to more logical and well-reasoned answers.

- Greater creativity: With a broader context, language models will be able to generate more diverse and creative outputs, such as stories, poems, or even music.

- Multitasking: As models become more advanced, they may be able to handle multiple tasks simultaneously, such as answering questions while also providing recommendations or suggestions.

Increasing the parameter count generally improves performance, because larger models can learn more nuanced patterns and relationships within the data. However, it also brings challenges: higher computational and memory requirements, and a risk of overfitting when the training data does not grow along with the model.
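To make the computational cost concrete, here is a minimal sketch that estimates how much memory is needed just to store a model's weights. It assumes each parameter occupies a fixed number of bytes (4 for float32, 2 for float16, 1 for int8) and ignores activations, optimizer state, and any caching, so real requirements will be higher. The function name `weight_memory_gb` and the model sizes are illustrative choices, not figures from any specific model.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"float32": 4, "float16": 2, "int8": 1}


def weight_memory_gb(num_params: int, dtype: str = "float16") -> float:
    """Return the memory needed to hold the weights alone, in GB (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    # Illustrative (hypothetical) model sizes, not tied to any real model.
    sizes = [("1B", 1_000_000_000), ("7B", 7_000_000_000), ("70B", 70_000_000_000)]
    for label, n in sizes:
        print(f"{label} parameters: {weight_memory_gb(n):.0f} GB in float16")
```

Under these assumptions the estimate scales linearly with parameter count, so a tenfold larger model needs roughly ten times the storage before any other overhead is counted.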

Closed-source vs open-source contenders:

Closed-source models, such as those developed by large corporations like Google and Microsoft, often have access to vast resources and proprietary data, which can give them an edge in performance and capabilities. However, their weights and training details are not available for the public to inspect, modify, or build upon, which can limit their potential impact and the rate of innovation.

Open-source models, like EleutherAI's GPT-NeoX or BigScience's BLOOM, are more accessible to the public and can be used, modified, and improved upon by a wider range of researchers and developers. (OpenAI released the weights of GPT-2, but its later GPT-3 and GPT-4 models are closed and reachable only through an API.) This openness can lead to faster innovation and a more diverse range of applications. However, open-source models may not always have access to the same level of resources or proprietary data as their closed-source counterparts.

In conclusion, large language models have the potential for significant advancements in understanding, reasoning, creativity, and multitasking. Both closed-source and open-source models will continue to play a crucial role in shaping this future, each with its own strengths and limitations.

As these models evolve, we can expect to see a growing range of applications and use cases, transforming the way we interact with technology and each other.

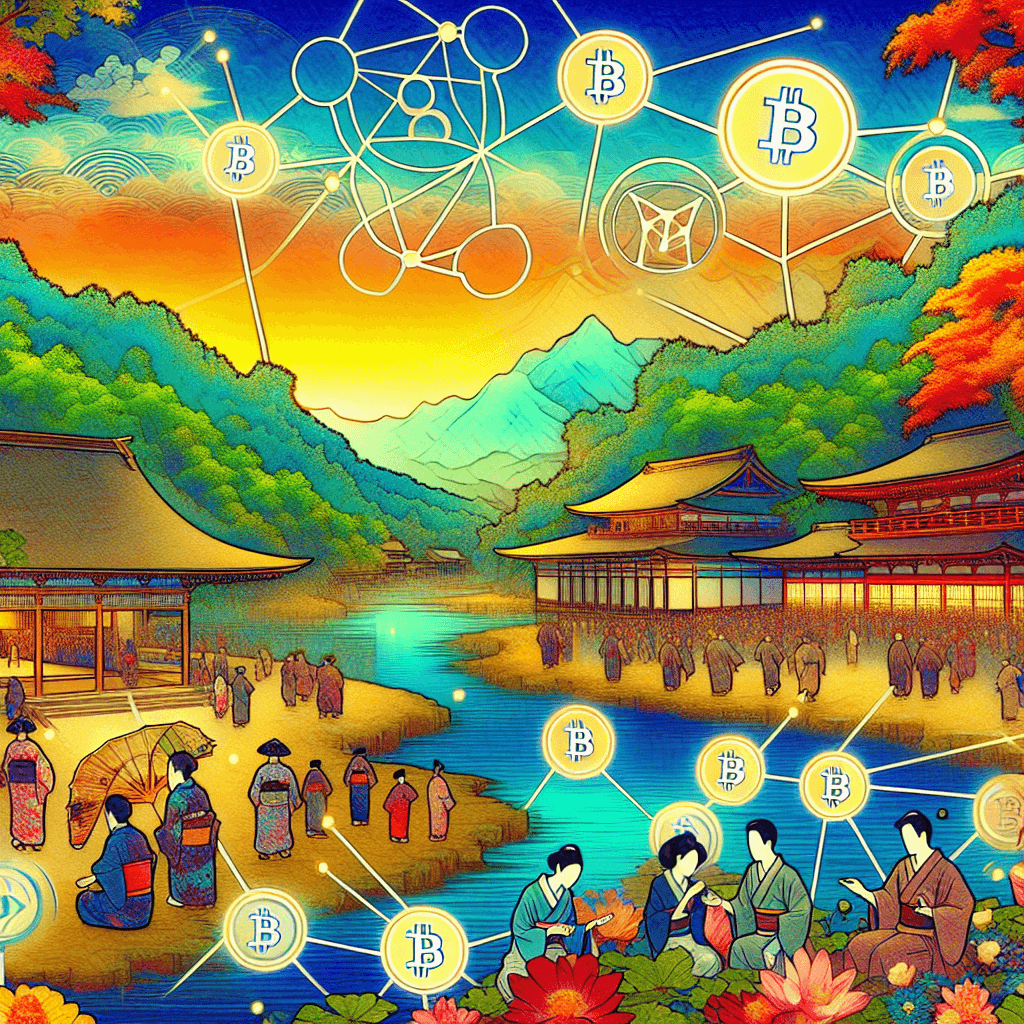
